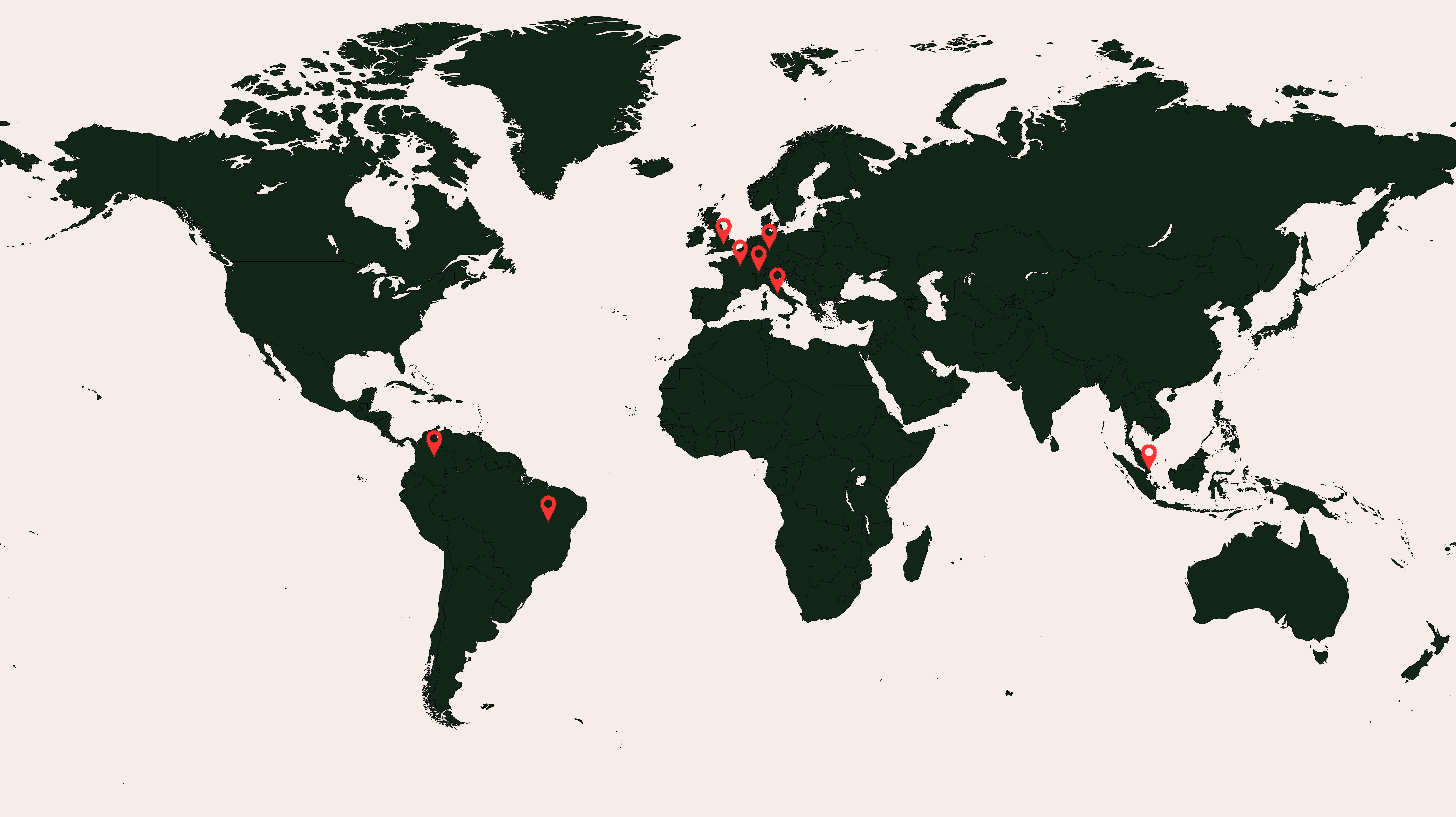

Past bootcamps - from most to least recent

Governance - France - October 2025

Singapore - September 2025

UK - September 2025

Germany - September 2025

Brasil - September 2025

Governance - France - July 2025

Italy - May 2025

Colombia - April 2025

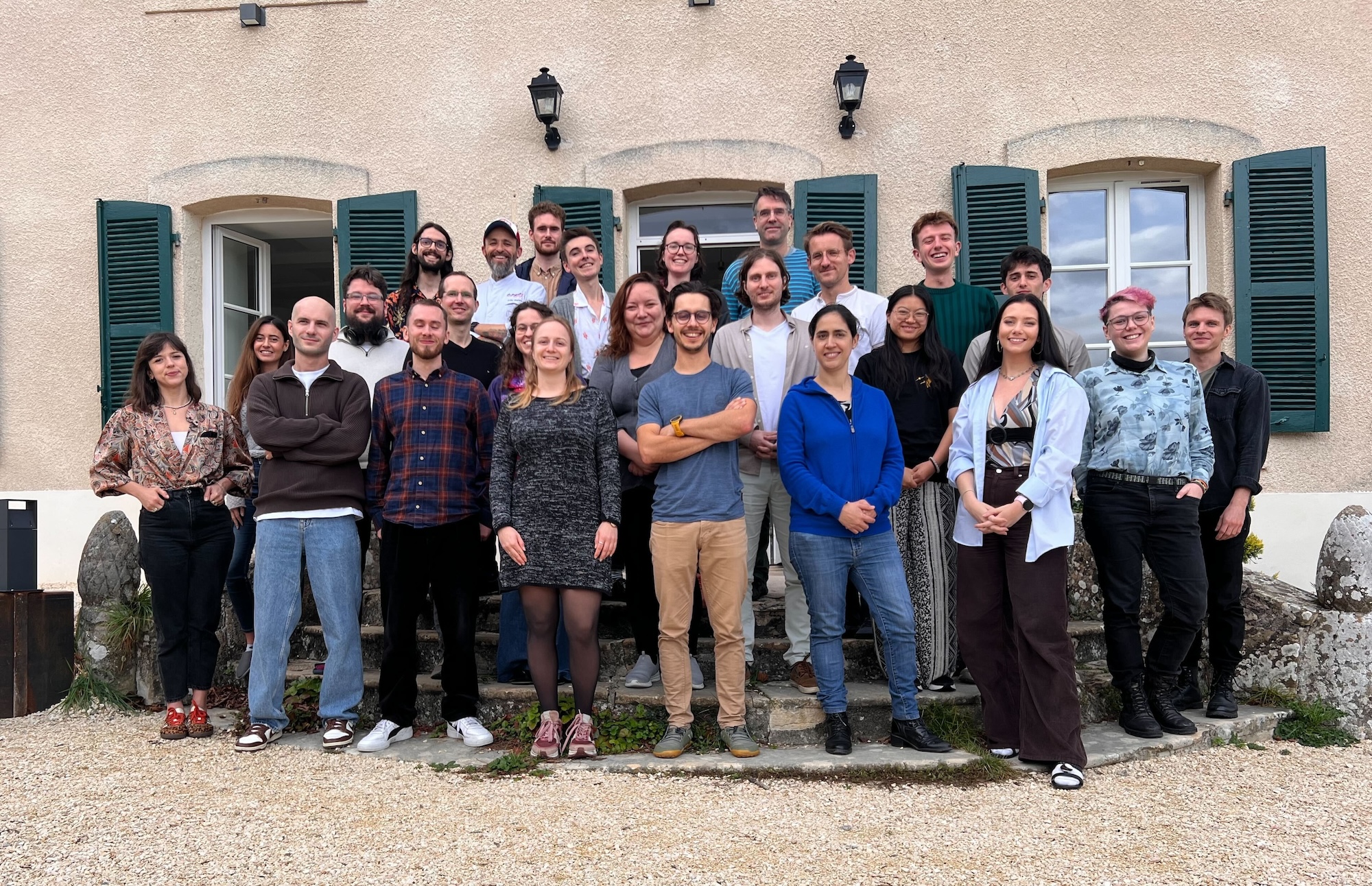

France - March 2025

Germany - October 2024

UK - September 2024

Brasil - July 2024

France - June 2024

UK - March 2024

Switzerland - September 2023

Germany - August 2023

France - August 2023

Switzerland - February 2023

France - November 2022